Store and Process CSV Files in the Background Using Laravel Queues

Handling CSV file uploads is a common feature in many web applications, especially dashboards and admin panels. However, processing a large CSV file during the request lifecycle keeps the user waiting and can run into PHP's execution time or memory limits. Instead, we can offload the heavy lifting to a background job using Laravel Queues.

In this article, you'll learn how to:

- ✅ Upload and store a CSV file

- ✅ Dispatch a job to process the file asynchronously

- ✅ Save the data into a database

Prerequisites

- Laravel 10+

- A configured queue connection (e.g. database, redis, sqs)

- A simple database table to insert records

Set Up the Database

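Let’s assume you’re importing users. A fresh Laravel install already ships with a users migration, so if yours has one, adapt it rather than creating a second. Otherwise, create a migration: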

php artisan make:migration create_users_table

Define the schema in the migration and run it:

php artisan migrate
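The article does not show the schema itself, so here is a minimal sketch of what the migration could contain. The column set is an assumption: it includes only the name, email, and timestamp columns that the import job writes later in this article. If you reuse Laravel's default users table instead, note that it has a required password column, so the import would also need to supply one.

```php
<?php

use Illuminate\Database\Migrations\Migration;
use Illuminate\Database\Schema\Blueprint;
use Illuminate\Support\Facades\Schema;

return new class extends Migration
{
    public function up(): void
    {
        // Assumed columns: only what the CSV import in this article fills in.
        Schema::create('users', function (Blueprint $table) {
            $table->id();
            $table->string('name');
            $table->string('email');
            $table->timestamps(); // created_at and updated_at
        });
    }

    public function down(): void
    {
        Schema::dropIfExists('users');
    }
};
```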

File Upload Form

Create a simple Blade file:

<!-- resources/views/upload.blade.php -->
<form action="{{ route('csv.upload') }}" method="POST" enctype="multipart/form-data">
    @csrf
    <input type="file" name="csv" accept=".csv" required>
    <button type="submit">Upload</button>
</form>

Routes and Controller

Create a controller:

php artisan make:controller CsvUploadController

Inside the controller, handle the file upload and dispatch a job.
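The controller body is not shown in the article, so the following is one possible sketch rather than the author's implementation. It relies on the CsvUpload tracking model from the Add Progress Tracking section and on the ProcessCsv job shown later, which takes the upload ID. The 10 MB size limit, the flash message, and storing the file under its original name are assumptions. The original name is used because the job builds its path from the tracking record's filename, so two uploads with the same name would overwrite each other.

```php
<?php

namespace App\Http\Controllers;

use App\Jobs\ProcessCsv;
use App\Models\CsvUpload;
use Illuminate\Http\RedirectResponse;
use Illuminate\Http\Request;

class CsvUploadController extends Controller
{
    public function upload(Request $request): RedirectResponse
    {
        // Accept only CSV or plain-text files; the 10 MB cap is an assumed limit.
        $request->validate([
            'csv' => ['required', 'file', 'mimes:csv,txt', 'max:10240'],
        ]);

        $file = $request->file('csv');

        // basename() drops any directory components from the client-supplied name.
        $filename = basename($file->getClientOriginalName());

        // Stores the file in the "uploads" directory of the default disk.
        $file->storeAs('uploads', $filename);

        // Tracking record that the job looks up by ID.
        $upload = CsvUpload::create([
            'filename' => $filename,
            'status' => 'pending',
        ]);

        ProcessCsv::dispatch($upload->id);

        return back()->with('status', 'Upload received; processing has been queued.');
    }
}
```

With the Laravel 10 defaults, storeAs('uploads', ...) writes to storage/app/uploads, which matches the path the job reads from. Newer versions may root the local disk elsewhere, so check config/filesystems.php and keep the two paths in sync.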

Define the routes:

// routes/web.php
use App\Http\Controllers\CsvUploadController;
use Illuminate\Support\Facades\Route;

Route::get('/upload', function () {
    return view('upload');
});

Route::post('/upload', [CsvUploadController::class, 'upload'])->name('csv.upload');

Create the Job to Process CSV

Generate a job class:

php artisan make:job ProcessCsv

In the handle() method, process the CSV and insert data into the database.

Set Up Queue

If you're using the database driver, update .env:

QUEUE_CONNECTION=database

Create the queue tables:

php artisan queue:table
php artisan migrate

Run the queue worker:

php artisan queue:work

Example CSV Format

Ensure your CSV follows a simple structure like:

name,email
John Doe,john@example.com
Jane Doe,jane@example.com
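A file in this format can be checked before any rows reach the database. The sketch below is plain PHP with no Laravel dependency. The helper name readCsvRows and its rules (exact header match, skipping blank or malformed lines) are assumptions made for illustration, not part of the article's job class.

```php
<?php

declare(strict_types=1);

/**
 * Yield one associative array per data row, keyed by the header names.
 * Hypothetical helper: rejects files whose header differs from the
 * expected one and silently skips blank or malformed lines.
 */
function readCsvRows(string $path, array $expectedHeader = ['name', 'email']): Generator
{
    $handle = fopen($path, 'r');
    if ($handle === false) {
        throw new RuntimeException("Unable to open CSV file at {$path}");
    }

    try {
        $header = fgetcsv($handle, null, ',', '"', '\\');
        if ($header === false || array_map('trim', $header) !== $expectedHeader) {
            throw new RuntimeException('Unexpected CSV header');
        }

        while (($row = fgetcsv($handle, null, ',', '"', '\\')) !== false) {
            // A blank line comes back as a single null field; skip it,
            // along with any row that has the wrong number of columns.
            if (count($row) !== count($expectedHeader)) {
                continue;
            }

            yield array_combine($expectedHeader, array_map('trim', $row));
        }
    } finally {
        // Runs even if the caller stops iterating early.
        fclose($handle);
    }
}
```

Because the function is a generator, rows are read one at a time, so memory use stays flat no matter how large the file is. The same check could sit inside the job before rows are grouped into chunks.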

Add Progress Tracking

Create a new table to track each CSV upload:

php artisan make:migration create_csv_uploads_table

In the migration file, include fields like:

$table->id();
$table->string('filename');
$table->enum('status', ['pending', 'processing', 'completed', 'failed'])->default('pending');
$table->timestamps();

Run the migration:

php artisan migrate

In your controller, create a tracking record when uploading:

$upload = CsvUpload::create([
    'filename' => $request->file('csv')->getClientOriginalName(),
    'status' => 'pending',
]);
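The CsvUpload model used here is never defined in the article. A minimal sketch of what it might look like follows; you could generate the file with php artisan make:model CsvUpload. The $fillable list is an assumption based on the two columns that the controller and job write.

```php
<?php

namespace App\Models;

use Illuminate\Database\Eloquent\Model;

class CsvUpload extends Model
{
    // Columns allowed in create() and update() calls. With the default
    // guarded setting, CsvUpload::create([...]) throws a
    // MassAssignmentException unless these are listed.
    protected $fillable = ['filename', 'status'];
}
```

Eloquent derives the table name csv_uploads from the class name, so it matches the migration above without extra configuration.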

Update the Job for Safe Execution

In the ProcessCsv job:

<?php

namespace App\Jobs;

use App\Models\CsvUpload;
use Illuminate\Bus\Queueable;
use Illuminate\Contracts\Queue\ShouldQueue;
use Illuminate\Foundation\Bus\Dispatchable;
use Illuminate\Queue\InteractsWithQueue;
use Illuminate\Queue\SerializesModels;
use Illuminate\Support\Facades\DB;
use Illuminate\Support\Facades\Log;

class ProcessCsv implements ShouldQueue
{
    use Dispatchable, InteractsWithQueue, Queueable, SerializesModels;

    protected int $uploadId;

    public function __construct(int $uploadId)
    {
        $this->uploadId = $uploadId;
    }

    public function handle(): void
    {
        $upload = CsvUpload::find($this->uploadId);

        if (!$upload) {
            Log::error("CSV upload record not found for ID {$this->uploadId}");

            return;
        }

        $upload->update(['status' => 'processing']);

        try {
            $path = storage_path("app/uploads/{$upload->filename}");

            // One transaction for the whole file: either every chunk is inserted or none is.
            DB::beginTransaction();

            // readCsvInChunks() opens the file and skips the header itself
            foreach ($this->readCsvInChunks($path) as $chunk) {
                DB::table('users')->insert($chunk); // bulk insert
            }

            DB::commit();

            $upload->update(['status' => 'completed']);
        } catch (\Throwable $e) {
            DB::rollBack();

            Log::error("Failed processing CSV ID {$this->uploadId}: " . $e->getMessage());

            $upload->update(['status' => 'failed']);

            // Rethrow so the queue records the failure and failed() can be called
            throw $e;
        }
    }

    public function failed(\Throwable $exception): void
    {
        // Additional fallback logic if the job fails (e.g. email admins)
        Log::error("Job failed for upload ID {$this->uploadId}: " . $exception->getMessage());

        CsvUpload::where('id', $this->uploadId)->update([
            'status' => 'failed',
        ]);
    }

    protected function readCsvInChunks(string $filePath, int $chunkSize = 500): \Generator
    {
        $handle = fopen($filePath, 'r');

        if (!$handle) {
            throw new \Exception("Unable to open CSV file at $filePath");
        }

        try {
            fgetcsv($handle); // Skip the header row

            $chunk = [];

            while (($row = fgetcsv($handle)) !== false) {
                // Skip blank lines and rows missing the name or email column
                if (!isset($row[0], $row[1])) {
                    continue;
                }

                $chunk[] = [
                    'name' => $row[0],
                    'email' => $row[1],
                    'created_at' => now(),
                    'updated_at' => now(),
                ];

                if (count($chunk) >= $chunkSize) {
                    yield $chunk;

                    $chunk = []; // reset
                }
            }

            if (!empty($chunk)) {
                yield $chunk; // yield remaining rows
            }
        } finally {
            // Close the file even if an insert throws while a chunk is being consumed
            fclose($handle);
        }
    }
}

Notes

- Store uploaded files in a known path (storage/app/uploads/)

- Call this job with:

ProcessCsv::dispatch($csvUpload->id);

- Make sure your queue worker is running.

Conclusion

Processing CSV files in the background using Laravel Queues ensures your application stays responsive and scalable. This technique is ideal for bulk imports and large data operations.

Comments

Great Tools for Developers

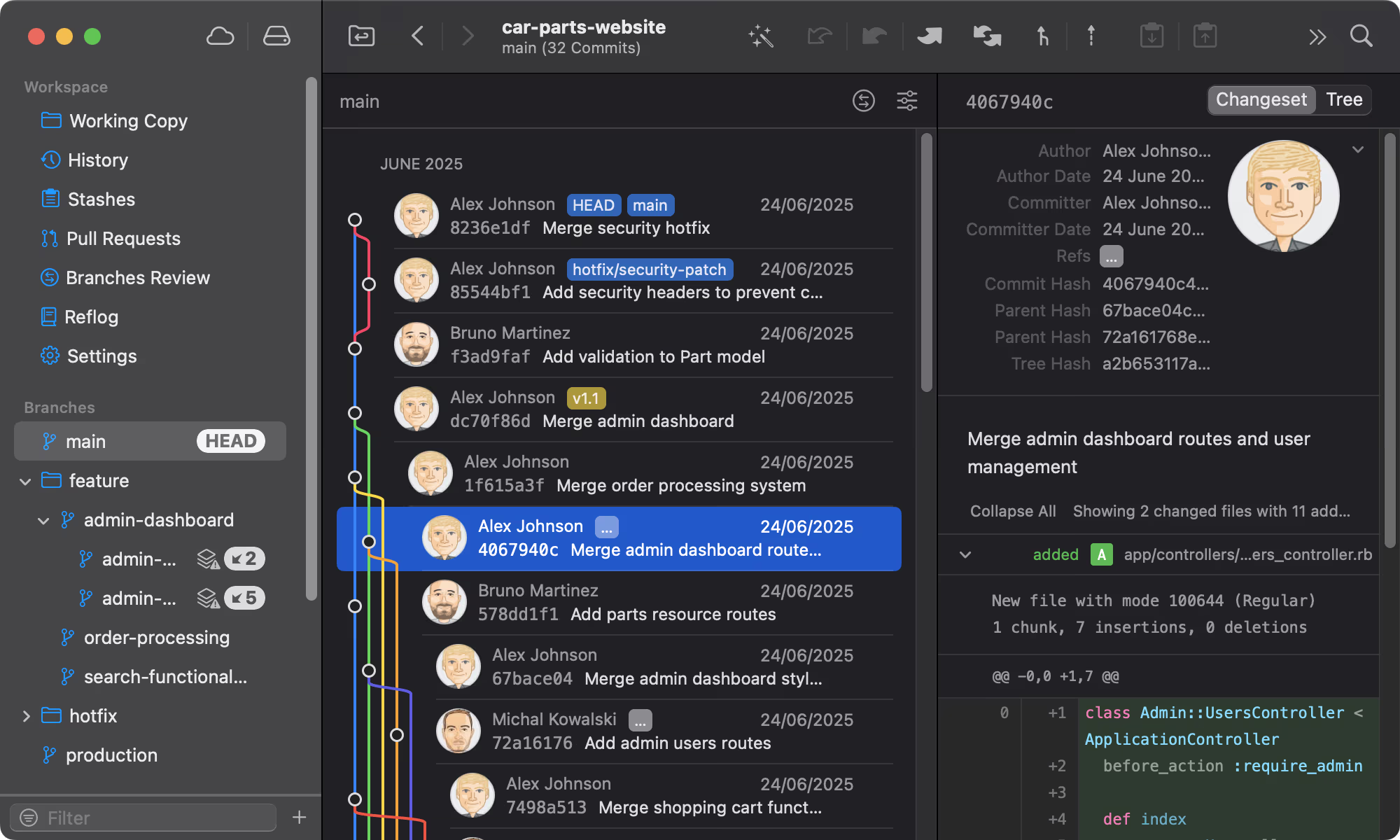

Git tower

A powerful Git client for Mac and Windows that simplifies version control.

Mailcoach's

Self-hosted email marketing platform for sending newsletters and automated emails.

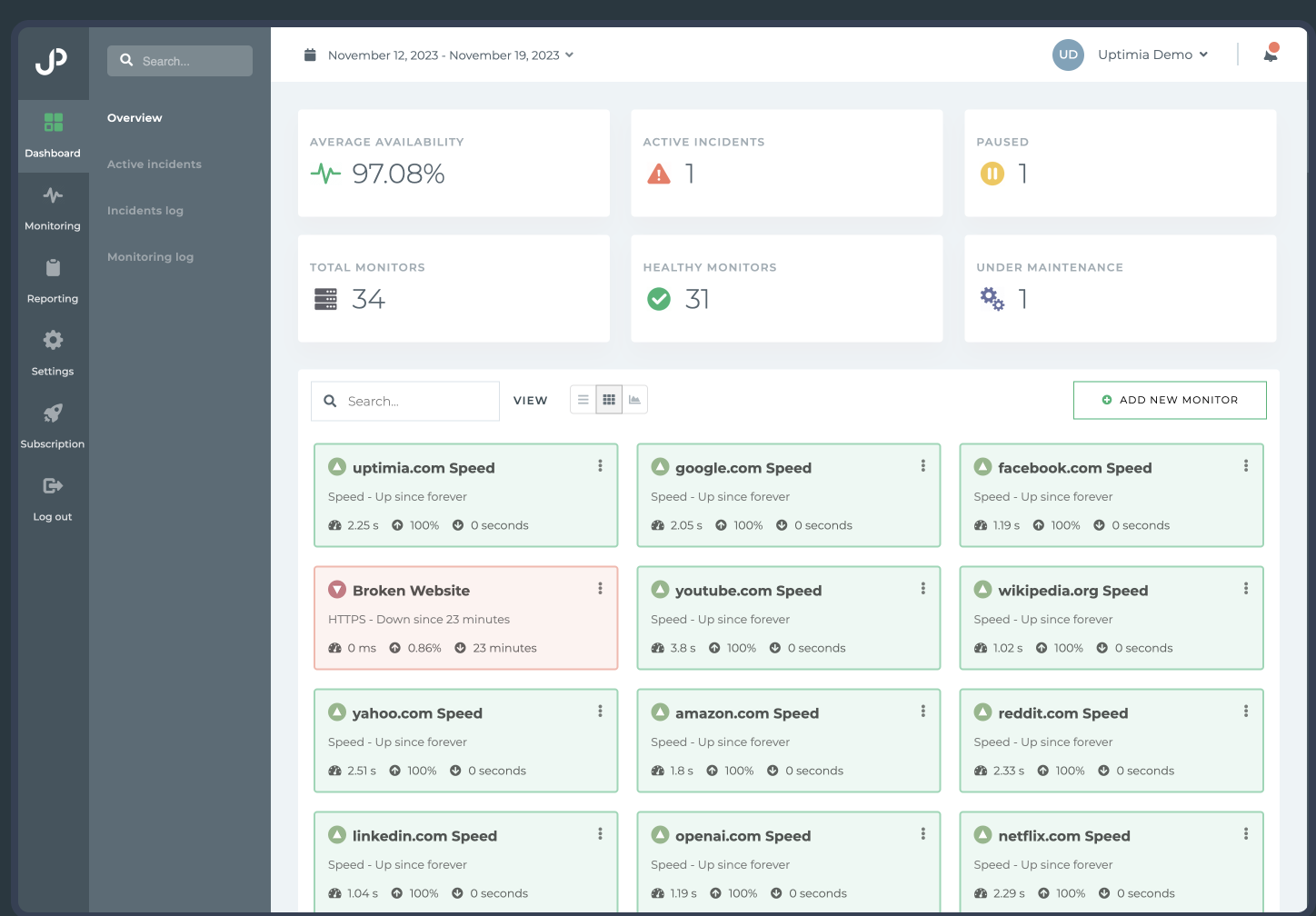

Uptimia

Website monitoring and performance testing tool to ensure your site is always up and running.

Please login to leave a comment.